Imagine your library rack is perfectly arranged. One day, you keep adding more books in the rack. Slowly, shelves start overflowing. You push things left, right, top, bottom… but nothing fits anymore.

At that moment, you do not “adjust.” You reorganize the entire library rack. That idea is called Rehashing. Means in Rehashing

1. Make the library rack bigger

2. Reorganize the entire rack from zero.

.

Now imagine instead of buying a bigger library rack, the rack magically grows new sections only where needed. That idea is called Extendible Hashing. Do not reorganize the entire rack from zero.

Both are advanced collision handling techniques used when simple probing or chaining is not enough.

Let us understand both in a simple and clear way.

.

What is Rehashing?

“Rehashing means creating a bigger hash table, and inserting all the elements again, to reduce collisions and improve performance.”

Rehashing is a technique used when the load factor of a hash table becomes too high.

Instead of handling more collisions in the same table, we –

- Create a new larger hash table

- Use a new hash function (or same function with new table size)

- Reinsert all existing elements

.

When Do We Perform Rehashing?

Usually when load factor α > 0.7 (or threshold value). This threshold is chosen because after this point, collisions increase rapidly.

Algorithm – Rehashing

- Monitor load factor α = n/m

- If α exceeds threshold:

- Create new table of size 2m (or next prime number)

- For each element in old table

- Compute new hash value

- Insert into new table

- Replace old table with new table

.

Example

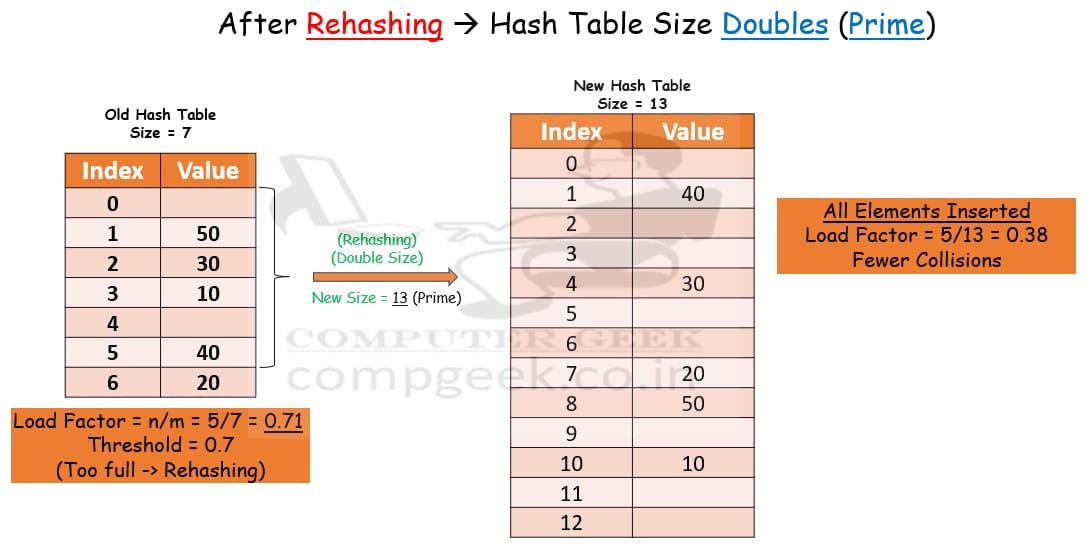

Suppose table size is m = 7 and you have to insert keys – 10, 20, 30, 40, 50

Load factor = 5/7 = 0.714

If threshold = 0.7, rehashing is triggered.

Now new table size = 13

We reinsert all elements using new hash function (k mod 13). Old distribution changes and Collisions reduce. Also, the performance improves again.

.

Time Complexity – Rehashing

Rehashing Insertion – O(n) because all elements must be reinserted. However, this does not happen every time. So amortized time complexity of insertion remains O(1).

.

Space Complexity – Rehashing

Its space complexity is O(m), but temporarily, we need extra space for new table while copying elements.

.

What is Extendible Hashing?

Now we move one level higher. Rehashing rebuilds the whole table.

“Extendible Hashing grows dynamically without rebuilding everything. The Hash Table doubles when needed.”

Extendible Hashing is a dynamic hashing technique where –

- A directory is maintained.

- Directory size doubles when needed.

- Buckets split individually instead of resizing whole table.

It uses bits of hash value to decide bucket location.

Key Concepts

Global Depth – Number of bits used to index directory

Local Depth – Number of bits used by a bucket

When a bucket overflow

- If local depth < global depth → split bucket

- If local depth = global depth → double directory and then split

This is smarter growth.

Algorithm – Extendible Hashing

- Compute hash value of key

- Use first d bits (global depth) to find directory index

- Insert into bucket

- If bucket overflows

- If local depth < global depth

- Split bucket

- Redistribute elements

- Else

- Double directory

- Increase global depth

- Split bucket

- If local depth < global depth

Example

The numbers are 18, 16, 20, 7, 24, 10, 31, 7.

Bucket Size = 3

Decimal | Binary |

4 | 00100 |

16 | 10000 |

6 | 00110 |

22 | 10110 |

24 | 11000 |

10 | 01010 |

31 | 11111 |

7 | 00111 |

9 | 01001 |

Suppose global depth = 1 and local depth = 1

Directory has 2Global Depth = 21 entries

2 Entries are 0 and 1.

.

Insert 4

Binary Form = 00100

Lowest Significant of 00100 = 0

Global Depth = 1

Local Depth = 1

Bucket Size = 3

0 | 4 |

1 |

|

.

Insert 16

Binary Form = 10000

Lowest Significant of 10000 = 0

Global Depth = 1

Local Depth = 1

Bucket Size = 3

0 | 4, 16 |

1 |

|

.

Insert 6

Binary Form = 00110

Lowest Significant of 00110 = 0

Global Depth = 1

Local Depth = 1

Bucket Size = 3

0 | 4, 16, 6 |

1 |

|

.

Insert 22

Binary Form = 10110

Lowest Significant of 10110 = 0

Global Depth = 1

Local Depth = 1

Bucket Size = 3

0 | 4, 16, 6, 22 |

1 |

|

Overflow condition, If local depth = global depth → double directory and then split

Local Depth of 0 = 2, Global Depth = 2

Directory has 2Global Depth = 22 entries

4 Enteries are 00, 10, 01, 11

00 | 4, 16 |

10 | 6, 22 |

01 |

|

11 |

.

Insert 24

Binary Form = 11000

Lowest Significant of 11000 = 0

Global Depth = 1

Local Depth of 00, 10 = 2

Local Depth of 01, 11 = 1

Bucket Size = 3

Local Depth of 0 = 2, Global Depth = 2

00 | 4, 16, 24 |

10 | 6, 22 |

01 |

|

11 |

.

Insert 10

Binary Form = 01010

Lowest Significant of 01010 = 10

Global Depth = 2

Local Depth of 00, 10 = 2

Local Depth of 01, 11 = 1

Bucket Size = 3

00 | 4, 16, 24 |

10 | 6, 22, 10 |

01 |

|

11 |

.

Insert 31

Binary Form = 11111

Lowest Significant of 11111 = 1

Global Depth = 2

Local Depth of 00, 10 = 2

Local Depth of 01, 11 = 1

Bucket Size = 3

00 | 4, 16, 24 |

10 | 6, 22, 10 |

01 | 31 |

11 |

.

Insert 7

Binary Form = 00111

Lowest Significant of 00111 = 1

Global Depth = 2

Local Depth of 00, 10 = 2

Local Depth of 01, 11 = 1

Bucket Size = 3

00 | 4, 16, 24 |

10 | 6, 22, 10 |

01 | 31, 7 |

11 |

.

Insert 9

Binary Form = 01001

Lowest Significant of 01001 = 1

Global Depth = 2

Local Depth of 00, 10 = 2

Local Depth of 01, 11 = 1

Bucket Size = 3

00 | 4, 16, 24 |

10 | 6, 22, 10 |

01 | 31, 7, 9 |

11 |

.

Insert 13

Binary Form = 01101

Lowest Significant of 01101 = 1

Global Depth = 2

Local Depth of 00, 10 = 2

Local Depth of 01, 11 = 1

Bucket Size = 3

00 | 4, 16, 24 |

10 | 6, 22, 10 |

01 | 31, 7, 9, 13 |

11 |

Overflow condition, If local depth < global depth → split bucket

Local Depth of 01, 11 = 2, Global Depth = 2

00 | 4, 16, 24 |

10 | 6, 22, 10 |

01 | 9, 13 |

11 | 31, 7 |

Hence, extendible hashing complete.

.

Time Complexity – Extendible Hashing

Searching – O(1)

Insertion – O(1)

Worst case insertion – O(n) during splitting

Since splits are local, performance remains stable.

.

Space Complexity – Extendible Hashing

Extra space required for directory and buckets. So, directory size grows as 2global_depth.

.

Applications

Rehashing is used in

- Programming language hash maps

- C++ unordered_map

- Java HashMap

- Python dictionaries

Extendible Hashing is used in

- Database indexing

- File systems

- Large disk-based storage systems

- Distributed storage

Test Yourself

Q1- A hash table uses rehashing when load factor exceeds 0.75. If table size is 8 and 7 elements are inserted, what will happen?

Rehashing will not occur

Rehashing will occur

Table becomes full

Collision stops

Ans – (2)

Explanation – Load factor α = n/m = 7/8 = 0.875

Since α > 0.75, rehashing will be triggered.

Q2- During rehashing, what happens to the old hash values of elements?

They remain same

They are recalculated using new table size

They are deleted

They are stored separately

Ans – (2)

Explanation – When table size changes, hash values change. So every element must be hashed again and inserted into the new table.

Q3- Which operation has worst-case complexity O(n) in rehashing?

Searching

Deletion

Rebuilding the table

Hash calculation

Ans – (3)

Explanation – During rehashing, all elements are inserted again into the new table, so time becomes O(n).

Q4- Extendible hashing mainly uses which structure?

Linked list

Directory

Stack

Queue

Ans – (2)

Explanation – Extendible hashing maintains a directory that stores pointers to buckets.

Q5- In extendible hashing, directory size depends on

Number of keys

Bucket size

Global depth

Local depth

Ans – (3)

Explanation –

Directory size = 2^(global depth).

Q6- If global depth = 3, how many directory entries exist?

3

6

8

16

Ans – (3)

Explanation –

Directory entries = 2^3 = 8.

Q7- If a bucket overflows and local depth < global depth, then

Entire table is rebuilt

Directory doubles

Only bucket splits

Hash function changes

Ans – (3)

Explanation – When local depth < global depth, only that bucket splits.

Q8- Why must all elements be reinserted during rehashing?

Ans – Because the hash index depends on table size. When table size changes, hash values change, so elements must be hashed again.

Q9- Explain why extendible hashing is called dynamic hashing.

Ans – Because directory and buckets grow dynamically as more elements are inserted.

Q10- Why is extendible hashing more efficient than rehashing for large data?

Ans – Because it avoids rebuilding the entire table. Only the overflowing bucket and directory are modified, which reduces computation.