Collision Handling Techniques

Hashing looks magical at first. You give a key, and data appears in constant time. Feels like teleportation for information. But then comes the twist in the story.

“Two different keys land in the same index. This is called collision in hashing.”

.

Collision happens when two different keys produce the same hash index.

For example – If we use a hash function h(k) = k mod 7

like,

First number, 14 mod 7 = 0th index

Second number, 35 mod 10 = 0th index

Then, both keys want to go to index 0. But the array has only one slot at index 0. So what now? That situation is called a collision.

Collision is not a bug. It is natural. And it must happen in real systems.

.

Why Collision Happens

Collisions happen because –

- The hash table size is limited.

- The number of possible keys is very large.

- A hash function compresses a large key space into a small index space.

Even if your hash function is excellent, collisions are unavoidable. And this brings us to a beautiful mathematical idea.

.

Pigeonhole Principle – The Core Idea

The Pigeonhole Principle is a simple but powerful concept in mathematics.

It says –

“If you have more pigeons than pigeonholes, at least one pigeonhole must contain more than one pigeon.”

That is, if you insert 11 keys into a hash table of size 10, at least one index must store more than one key. This is not bad luck. It is mathematics. Collision handling techniques are simply smart ways to manage this reality.

.

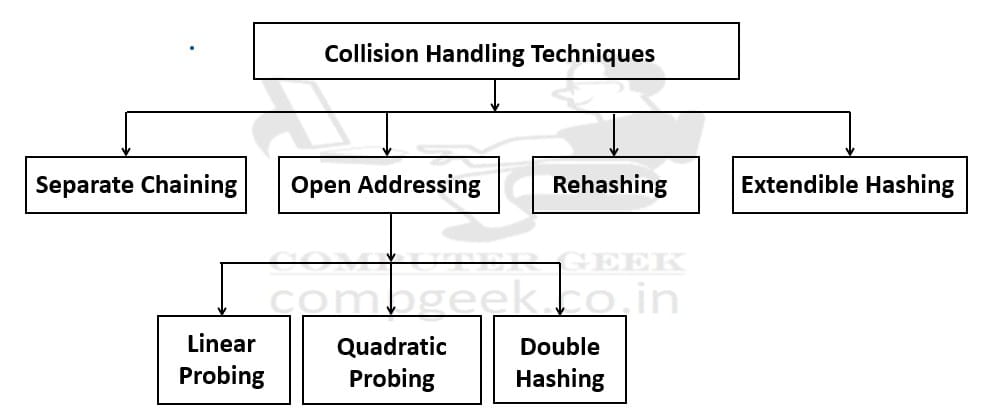

Collision Handling Techniques

Can be classified as –

In this post, we focus deeply on Separate Chaining, also called Closed Addressing.

.

Separate Chaining – Closed Addressing

“Separate Chaining is a collision handling technique where each index of the hash table stores a linked list. If multiple keys map to the same index, we store them in that linked list. Instead of fighting over one seat, they sit in a small queue.”

.

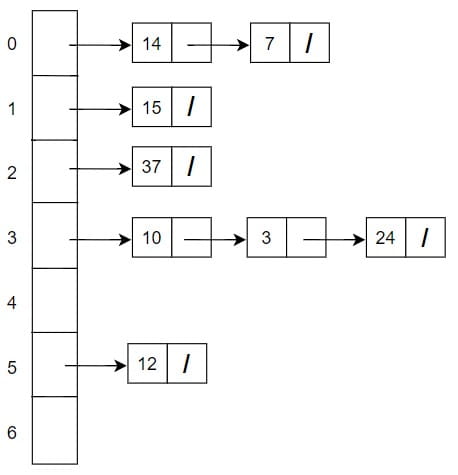

Input is = {10, 15, 3, 12, 14, 24, 7, 37}

Suppose that hash function is h(k) = k mod 7

Also, we have a linked list method in each of the index of the hash table. Linked list is called a bucket and the bucket of index 0 is a separate chain to the index 1.

Linked List Method

Each array index is called a bucket and each bucket contains a linked list. If keys 14 and 7 both map to index 7, then

Index 0 → 14 → 7

Both values are stored safely. No overwriting. No panic.

But the insertion, searching and deletion has a big impact, their worst-case time complexity is O(n).

.

1. Insertion in Separate Chaining

Steps –

- Compute hash index.

- Go to that index.

- Insert the key into the linked list at that bucket.

Insertion is usually done at the beginning of the linked list because it is faster.

Time complexity for insertion –

- Best case – O(1)

- Average case – O(1)

- Worst case – O(n) if all keys go to same bucket

But with a good hash function and proper table size, average case remains constant.

.

2. Searching in Separate Chaining

Steps –

- Compute hash index.

- Go to that bucket.

- Traverse the linked list.

- Compare keys one by one.

Time complexity –

- Best case – O(1)

- Average case – O(1 + α)

- Worst case – O(n)

Here α (alpha) is called load factor.

Load Factor = Number of elements / Table size. If load factor is small, searching is very fast.

.

3. Deletion in Separate Chaining

Steps –

- Find the bucket using hash function.

- Traverse the linked list.

- Remove the node.

Deletion time complexity –

- Best case – O(1)

- Average case – O(1)

- Worst case – O(n)

Linked list makes deletion flexible and easy.

.

Advantages of Separate Chaining

- Easy to implement.

- Simple deletion.

- Table never becomes completely full.

- Less clustering problem compared to open addressing.

- Works well even if load factor is greater than 1.

- Many standard library implementations of hash maps use chaining internally.

Disadvantages of Separate Chaining

- Extra memory needed for linked list pointers.

- Cache performance is not as good as open addressing.

- If load factor becomes very high, performance decreases.

So it is not perfect, but it is stable and reliable.

Test Yourself

Q1- A hash table of size m uses Separate Chaining. If the hash function distributes keys uniformly and n = m, what is the probability that a randomly chosen bucket is empty (approximately)?

0

e-1

1/2

1

Ans – (2)

Explanation –

When n = m, load factor α = 1. Under uniform hashing, probability a bucket is empty ≈ e-α = e-1 ≈ 0.367. Many students assume half buckets are empty, which is wrong.

Let us focus on one bucket only.

For one key, what is the probability that it does NOT go into this bucket? Since there are m buckets and distribution is uniform, probability that it goes into this bucket is 1/m.

So, probability that it does NOT go into this bucket is 1 − 1/m.

Now we insert n keys. Each key independently avoids that bucket with probability (1 − 1/m). So, probability that all n keys avoid that bucket is (1 − 1/m)n

This gives the probability that the bucket remains empty.

Now substitute n = m. We get (1 − 1/m)m.

As m becomes large, (1 − 1/m)m → e-1 and e-1 ≈ 0.367. So approximately 36.7% of buckets are empty even when n = m.

This surprises many students. They think “If n = m, table must be full.” But hashing does not distribute perfectly evenly. Some buckets get 2 elements. Some get 3. Some get 0.

Q2- In Separate Chaining, which operation is most sensitive to increasing load factor?

Hash computation

Memory allocation

Searching

Array indexing

Ans – (3)

Explanation – As α increases, chain length increases. Searching time increases linearly with α.

Q3- If we replace linked list in each bucket with a balanced BST, worst-case search becomes:

O(n)

O(log α)

O(α)

O(1)

Ans – (2)

Explanation – If each bucket uses balanced tree, worst case per bucket becomes O(log α). Java HashMap uses this idea.

Q4- If m is prime and hash function is uniform, Separate Chaining guarantees:

No collisions

Equal chain lengths

Expected constant time

Sorted buckets

Ans – (3)

Explanation – Uniform hashing gives expected O(1) performance.

Q5- If elements are inserted always at tail of linked list, insertion cost becomes:

O(α)

O(n)

O(log α)

O(1)

Ans – (1)

Explanation – We must traverse entire chain of length α to reach tail.

Q6- If α = 10, expected successful search comparisons are approximately:

1

6

10

20

Ans – (2)

Explanation – Successful search ≈ 1 + α/2 ≈ 6 comparisons roughly.

Q7- For a good hash function, expected chain length is:

n

m

log n

α

Ans – (3)

Explanation – Expected chain length equals load factor α.

Q8- Prove that worst-case Separate Chaining degenerates into linked list search.

Ans – If all keys hash to same bucket, table effectively becomes one linked list of size n. So operations become O(n).

Q9- Why is α often kept around 0.75 in practical systems?

Ans – Because performance remains close to constant time while memory waste is not too high. It balances speed and space.

Q10- Why is cache performance weaker in Separate Chaining?

Ans – Linked list nodes may be scattered in memory. CPU cache works better with contiguous memory. Therefore open addressing often has better cache locality.